What exactly is the "Oversight Gap" that AI Disclosure Network solves?


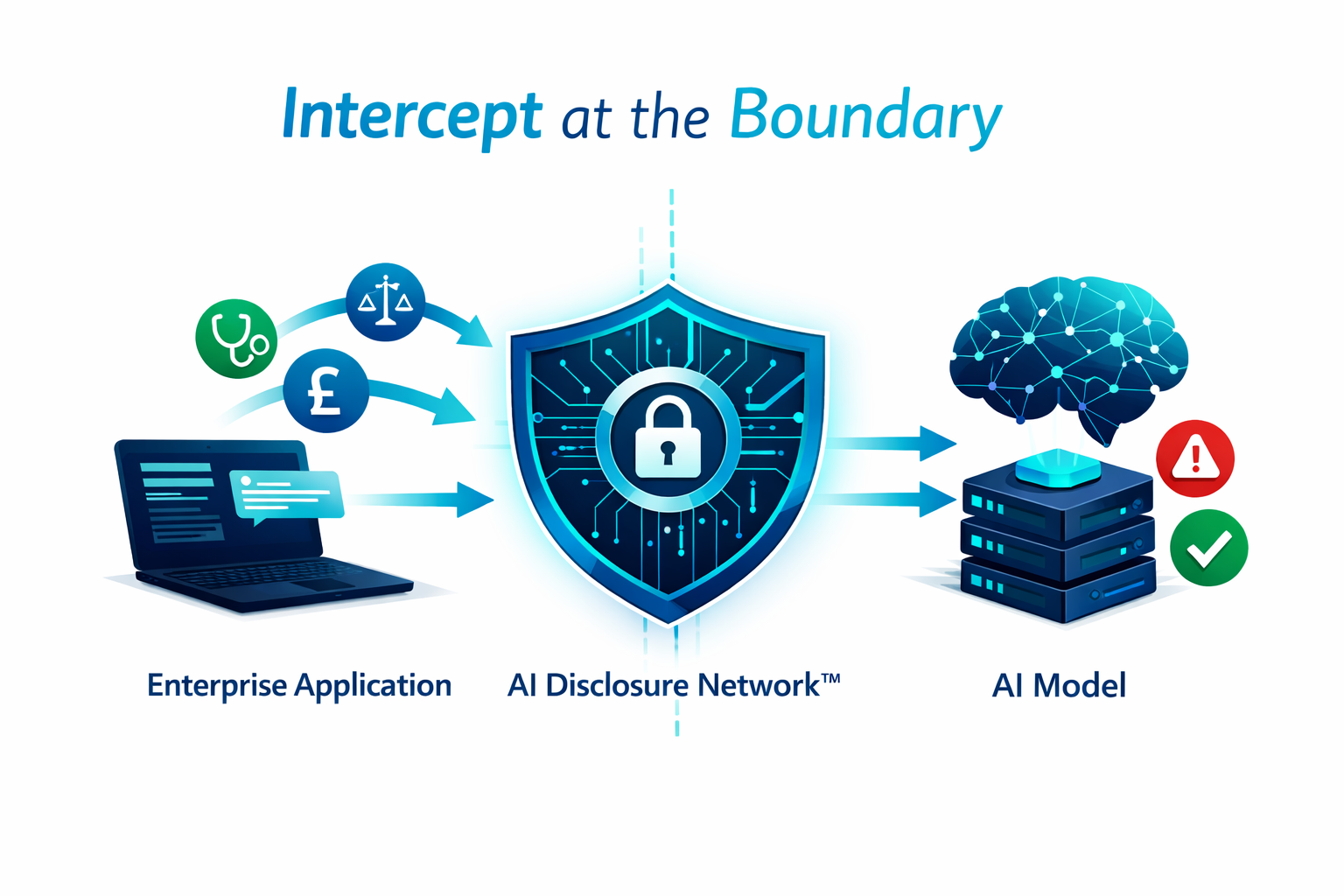

The Oversight Gap refers to the systemic vulnerability created when organisations deploy Generative AI without adequate governance. Over 71% of UK employees use unapproved "Shadow AI" tools, routinely pasting sensitive client data, internal trade secrets, and regulated information into public LLM interfaces. Without a governance gateway, this data crosses organisational boundaries undetected, creating data breach liability and regulatory non-compliance. AI Disclosure Network™ closes this gap by operating at the prompt boundary itself — the only point where intervention is both technically effective and operationally seamless.

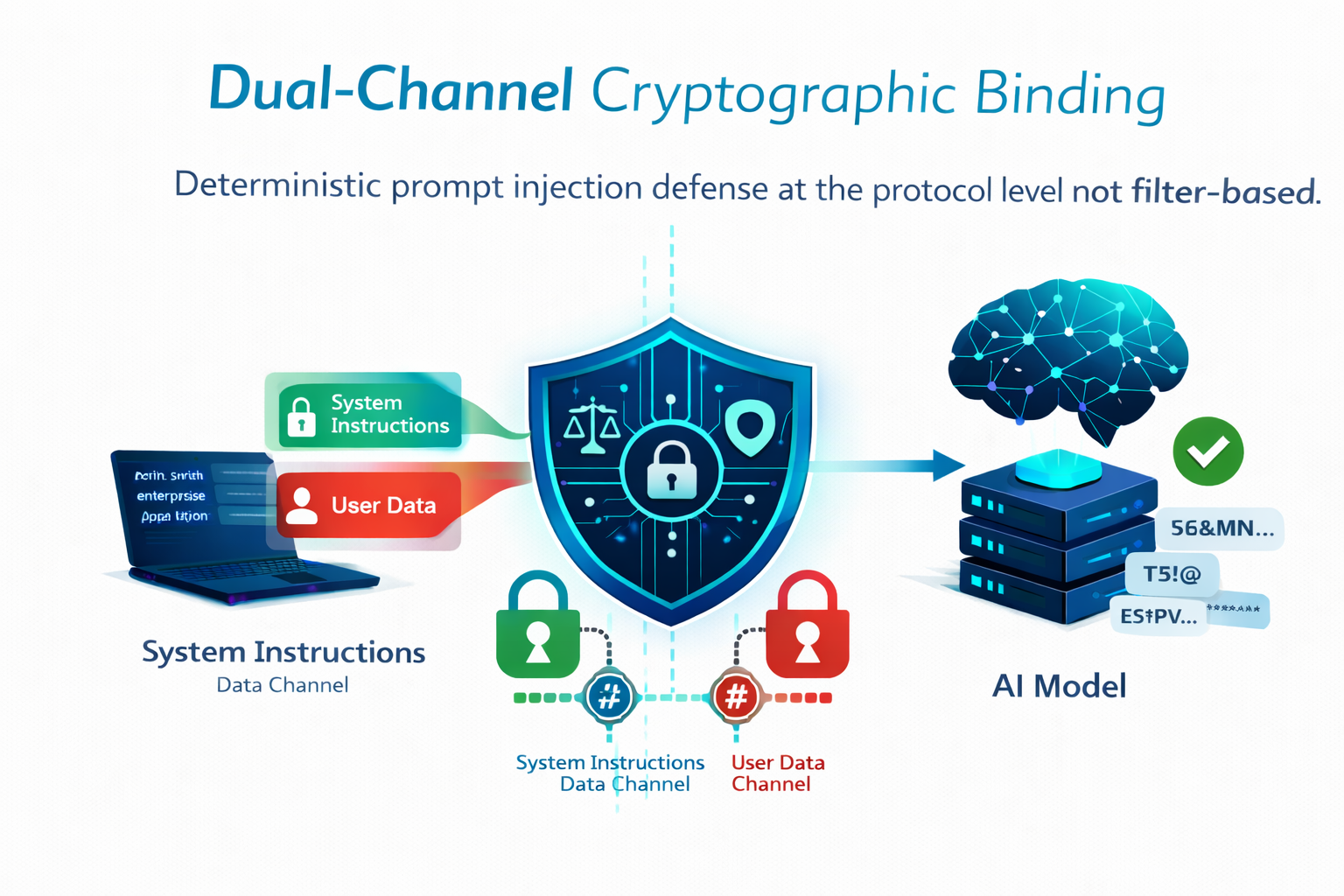

How does Dual-Channel Cryptographic Binding prevent prompt injection?


Traditional guardrail models try to detect malicious prompts using semantic filters, but these can be bypassed via obfuscation or many-shot jailbreaking techniques. Our Dual-Channel Prompt Packaging physically separates system instructions from user-provided data into two independent channels, bound by hash-based cryptographic signatures at the proxy level. The model processor cannot merge or re-interpret safety instructions with user data. This makes prompt injection a structural impossibility at the protocol level, not a probabilistic challenge that clever phrasing can bypass. It is a deterministic defence, not a best-effort filter.
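To make the binding idea concrete, the following is a minimal illustrative sketch, not the production implementation. It assumes the "hash-based cryptographic signature" is an HMAC-SHA256 tag over the digests of both channels; the function names `package_prompt` and `verify_package`, the field names, and the key handling are all hypothetical.

```python
import hashlib
import hmac
import json


def package_prompt(system_instructions: str, user_data: str, key: bytes) -> dict:
    """Keep each input in its own channel and bind both with one HMAC tag.

    Assumption: a shared secret `key` is held by the proxy. The real
    product's signing scheme is not described in this FAQ.
    """
    system_digest = hashlib.sha256(system_instructions.encode("utf-8")).hexdigest()
    user_digest = hashlib.sha256(user_data.encode("utf-8")).hexdigest()
    # The binding covers both digests under labelled keys, so text cannot be
    # moved from one channel to the other without changing the tag.
    binding = json.dumps({"system": system_digest, "user": user_digest}, sort_keys=True)
    tag = hmac.new(key, binding.encode("utf-8"), hashlib.sha256).hexdigest()
    return {
        "system_channel": system_instructions,
        "user_channel": user_data,
        "signature": tag,
    }


def verify_package(package: dict, key: bytes) -> bool:
    """Recompute the tag; a change to either channel invalidates it."""
    expected = package_prompt(
        package["system_channel"], package["user_channel"], key
    )["signature"]
    return hmac.compare_digest(expected, package["signature"])
```

In this sketch, a downstream component that calls `verify_package` would reject any package whose system channel was altered or whose user text was shifted into the system channel after signing. It only demonstrates tamper detection on the packaged channels.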

Will the gateway affect our AI workflow performance or user experience?


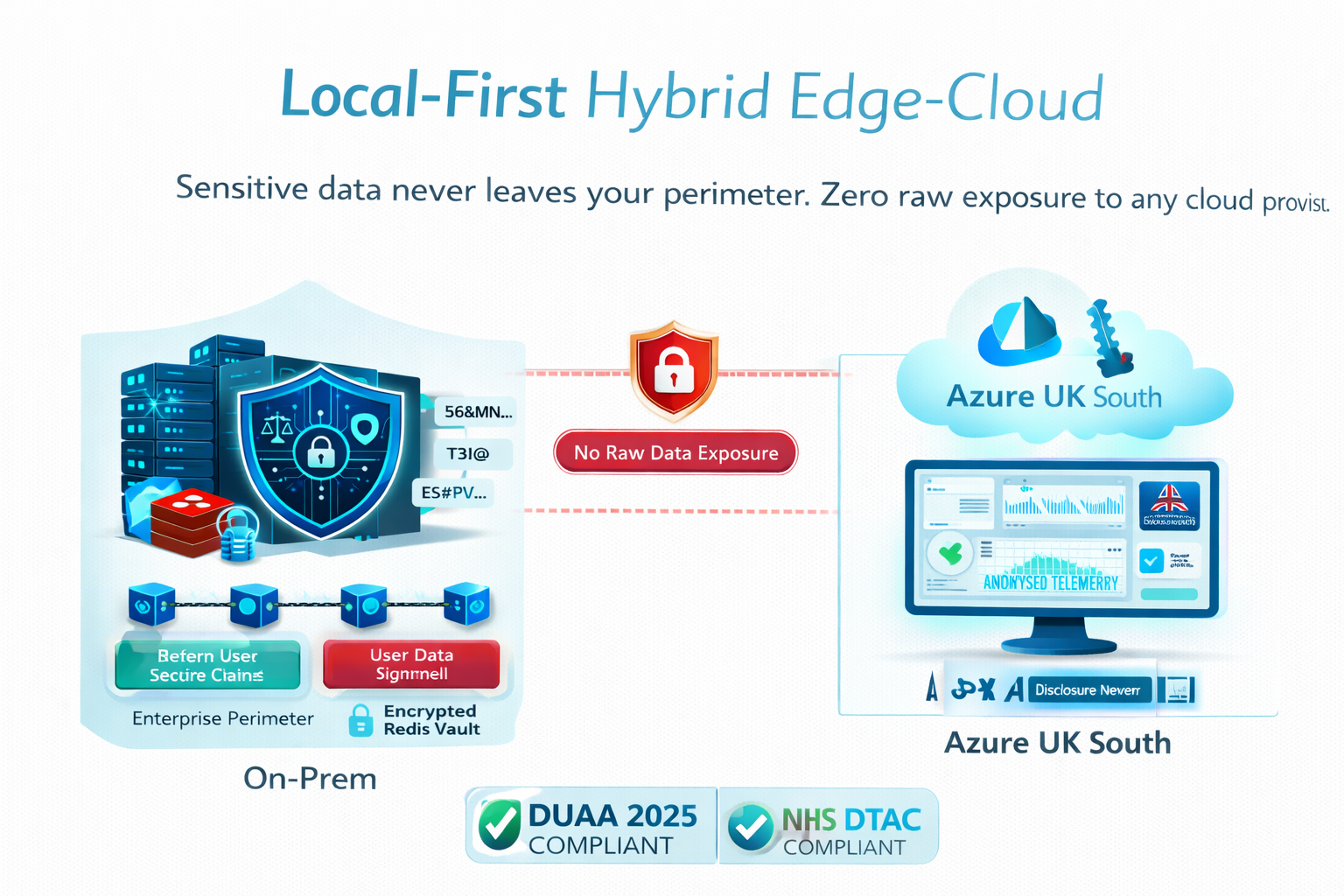

No. The gateway is engineered specifically for high-frequency enterprise environments. Our TRL 7 prototype has been validated at sub-150ms conversational overhead in simulated trading and advisory environments, well below the threshold of perceptible latency for professional users. The "droppable proxy" architecture requires zero code changes to existing AI applications. The local-first processing model also offloads the most compute-intensive tasks (entity detection and vaulting) to the client's own infrastructure, ensuring the governance layer does not introduce cloud-dependent bottlenecks.

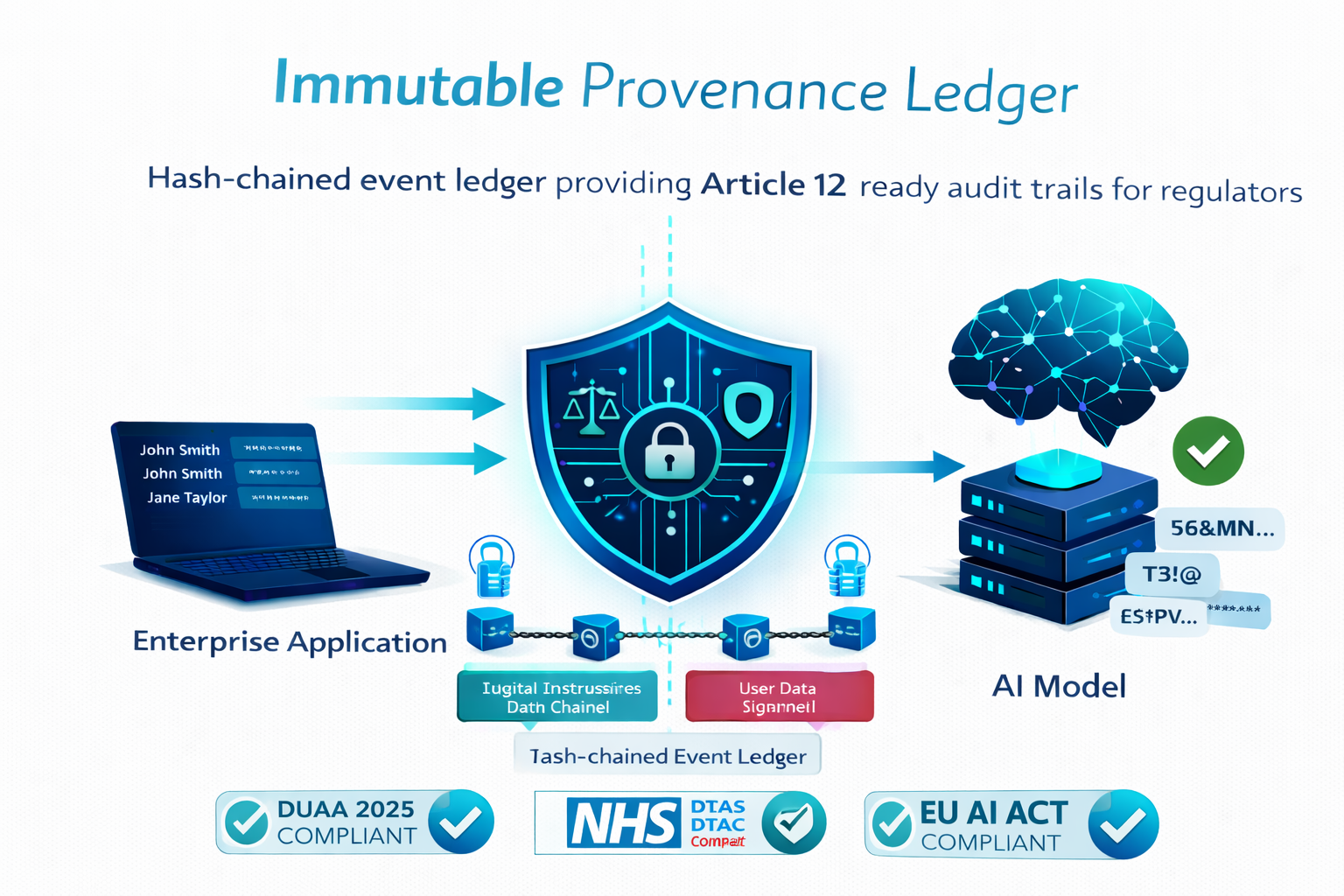

How does the platform satisfy the DUAA 2025 and NHS DTAC requirements?


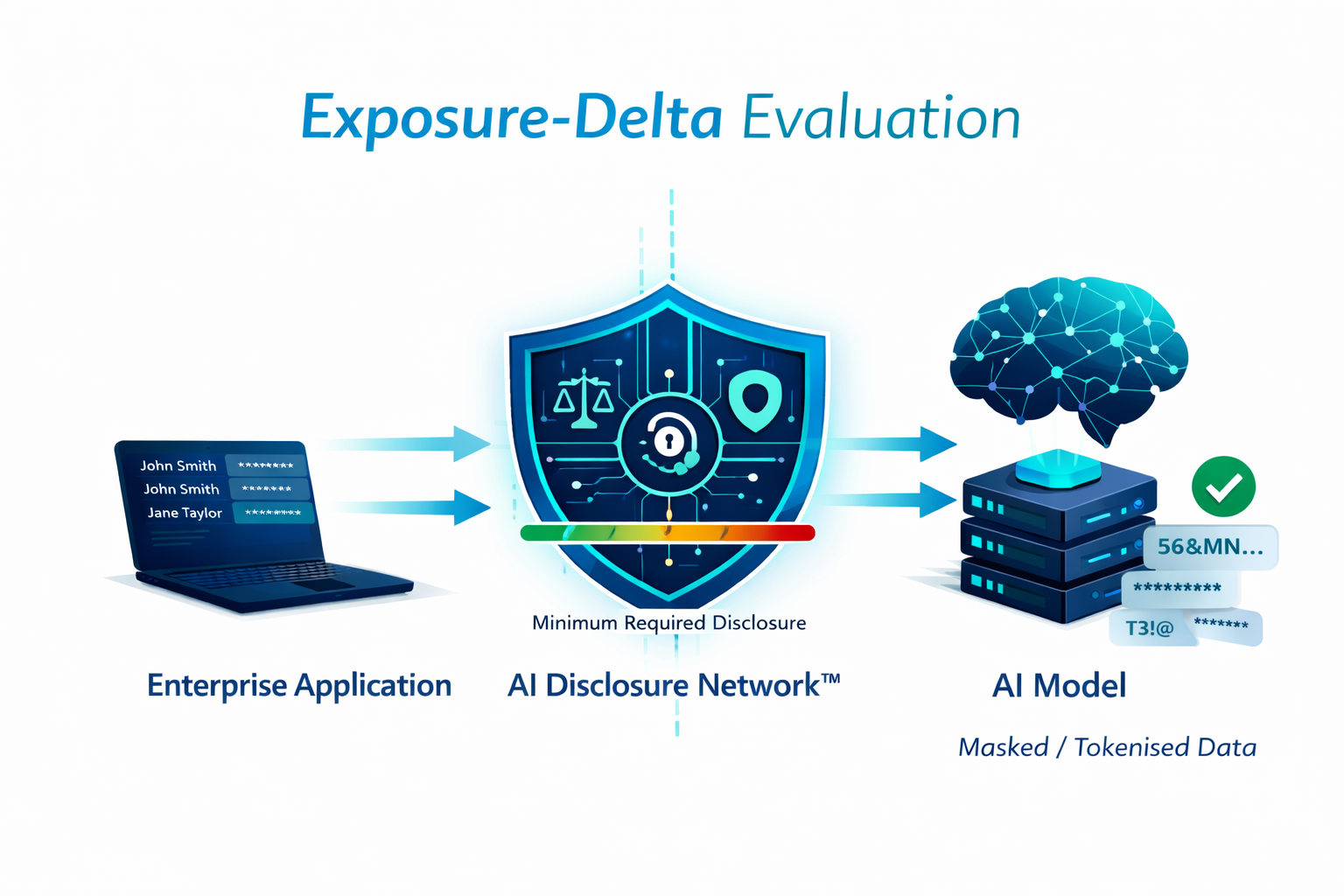

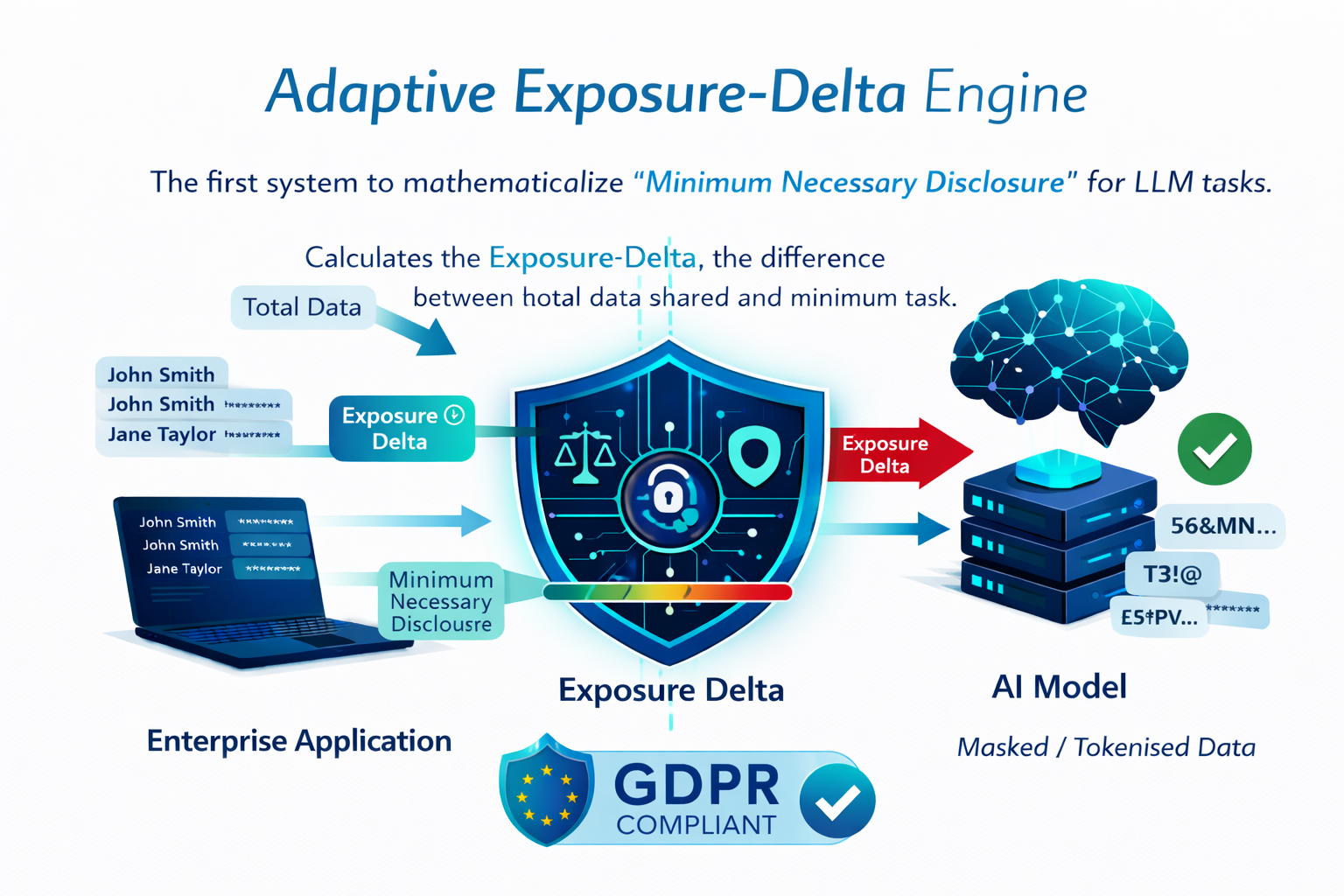

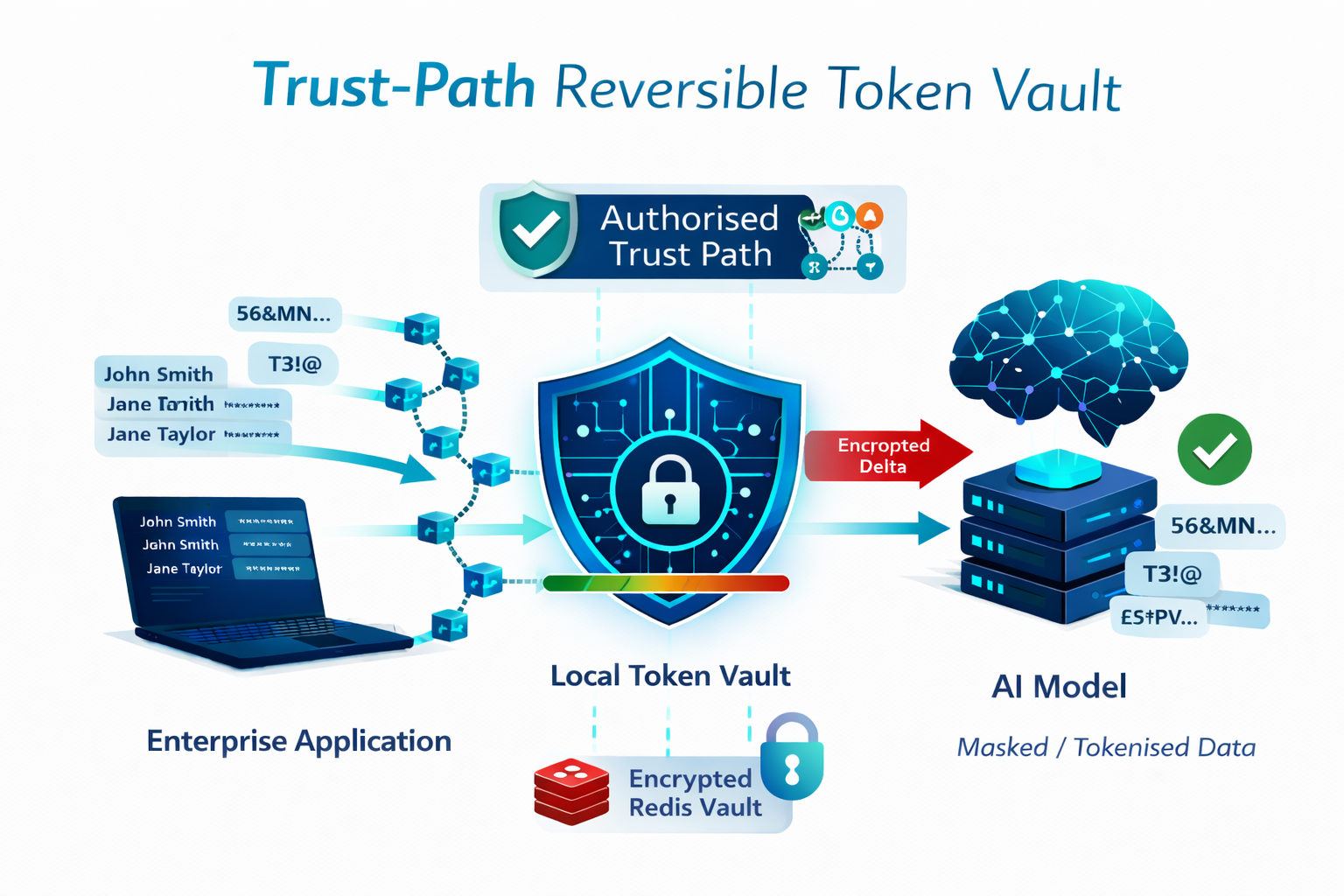

The Data (Use and Access) Act 2025 mandates "stringent safeguards" for automated processing, including data minimisation and tamper-evident audit evidence. Our platform operationalises data minimisation through automated Exposure-Delta evaluation, and provides the immutable hash-chained interaction ledger required for the "Right to Explanation" under DUAA and UK GDPR. For NHS clients, the Reversible Token Vaulting mechanism ensures Patient Identifiable Data (PID) is never exposed to external models during administrative automation, directly satisfying NHS DTAC Clinical Safety (DCB0129) and Data Protection requirements.
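As an illustration of what "hash-chained" means in practice, here is a minimal sketch of a tamper-evident ledger. It is an assumption-laden example rather than the platform's actual ledger: the entry layout, the `GENESIS_HASH` constant, and the function names `append_entry` and `verify_chain` are hypothetical.

```python
import hashlib
import json

# Hypothetical placeholder for the hash that precedes the first entry.
GENESIS_HASH = "0" * 64


def _digest(event: dict, prev_hash: str) -> str:
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(ledger: list, event: dict) -> dict:
    """Append an event, linking it to the previous entry's hash."""
    prev_hash = ledger[-1]["entry_hash"] if ledger else GENESIS_HASH
    entry = {
        "event": event,
        "prev_hash": prev_hash,
        "entry_hash": _digest(event, prev_hash),
    }
    ledger.append(entry)
    return entry


def verify_chain(ledger: list) -> bool:
    """Walk the chain; any edited, removed, or reordered entry breaks it."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        if entry["prev_hash"] != prev_hash:
            return False
        if _digest(entry["event"], prev_hash) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True
```

Because each entry's hash depends on the one before it, changing a past event requires recomputing every later hash, which is what makes after-the-fact edits detectable by an auditor who holds the latest hash.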

Is the Reversible Token Vaulting system truly capable of full data restoration?


Yes. Re-hydration testing at TRL 7 confirmed 100% accuracy in restoring tokenised data when Trust-Path variables are correctly aligned. This distinguishes our approach from one-way redaction (used by competitors like Private AI and Nightfall AI), which permanently destroys data utility. Our system allows the LLM to process logical relationships and complete complex drafting tasks using tokenised placeholders — then restores the original values for the end-user via the approved internal endpoint. This preserves full AI utility for solicitor-client drafting, clinical documentation, and financial report generation.
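The tokenise-then-restore flow can be sketched as follows. This is a simplified illustration under stated assumptions, not the product's vaulting mechanism: the `TokenVault` class, the `[[TKN_...]]` placeholder format, and the in-memory storage are hypothetical, entity detection is assumed to have already produced the list of sensitive strings, and Trust-Path checks are omitted.

```python
import re
import secrets


class TokenVault:
    """In-memory reversible mapping between sensitive values and placeholders."""

    _TOKEN_PATTERN = re.compile(r"\[\[TKN_[0-9a-f]{8}\]\]")

    def __init__(self) -> None:
        self._token_by_value = {}
        self._value_by_token = {}

    def _token_for(self, value: str) -> str:
        """Return the existing token for a value, or mint a new unique one."""
        token = self._token_by_value.get(value)
        if token is None:
            token = f"[[TKN_{secrets.token_hex(4)}]]"
            while token in self._value_by_token:  # avoid rare collisions
                token = f"[[TKN_{secrets.token_hex(4)}]]"
            self._token_by_value[value] = token
            self._value_by_token[token] = value
        return token

    def tokenise(self, text: str, entities: list) -> str:
        """Replace each detected entity with its placeholder in a single pass."""
        values = sorted({e for e in entities if e}, key=len, reverse=True)
        if not values:
            return text
        pattern = "|".join(re.escape(v) for v in values)
        return re.sub(pattern, lambda m: self._token_for(m.group(0)), text)

    def rehydrate(self, text: str) -> str:
        """Swap known placeholders back to original values; leave unknown ones."""
        return self._TOKEN_PATTERN.sub(
            lambda m: self._value_by_token.get(m.group(0), m.group(0)), text
        )
```

The same value always maps to the same placeholder within one vault, so a model working on the tokenised text can still see that two mentions refer to the same party. Restoration depends on the model output reproducing the placeholders exactly.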

What is the technology readiness level and current development status?


AI Disclosure Network™ is at TRL 7 (System Prototype Demonstration in an Operational Environment). The functional prototype integrates all five core architectural elements: the Redaction Proxy Gateway (FastAPI + Go), Trust-Path Logic Engine, Dual-Channel Structural Formatter, Immutable Event Ledger (alpha), and Edge-Inference Privacy Layer. Validated performance metrics include 99.2% injection mitigation, 96.4% F1-score in entity detection, sub-150ms latency, and 100% re-hydration fidelity. We are nearing TRL 8, with Kubernetes migration to Azure UK South, OpenTelemetry API harmonisation, and UKIPO patent filings underway.